Ever since moving back to Orcas several years ago I have been looking for ways to connect to the internet.

The good news is today, in 2016, there are more ways than ever. But making sense of them all is difficult if you are not a tech geekhead (like me).

This post tries to shed light on the different options available to those on Orcas. I will try my hardest to make this easy to understand.

As with many other decisions in life, we weigh our choices based on things like features and price, and we need to be able to see through all the marketing doubletalk. Internet connections are no different.

CenturyLink DSL

Even though you can get DSL through others (Rockisland/Orcas Online), it’s CenturyLink who is providing internet over the copper wire that you use for your phone.

Depending on where you live and how far you are from the local “remote”, this might be an option for you. It is probably what most of you already use for internet.

The remote is either the main office in Eastsound, or a box that is on the side of the road somewhere. Usually it’s within a mile or two (or three+!) from you.

If you are close enough to the remote, and it is connected to the rest of the world by a fiber optic cable, then you can probably get speeds of 6 Mbps or more download, and about 1/10 of that upload (see this page for an explanation of upload/download speeds). Otherwise you will only get about 1.5 Mbps download and about 1/10 of that upload.
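To put those numbers in everyday terms, here is a rough sketch of how long it takes to move a file at these speeds. The 100 MB file size is just an example I picked (think of a folder of photos), and real connections rarely hit their full rated speed, so treat the results as best-case estimates.

```python
def transfer_seconds(size_megabytes: float, speed_mbps: float) -> float:
    """Best-case seconds to move a file of the given size at the given line speed."""
    megabits = size_megabytes * 8  # 1 byte = 8 bits
    return megabits / speed_mbps


FILE_MB = 100  # example file size, an assumption for illustration

# Download speeds from the text; upload is taken as 1/10 of download.
for download_mbps in (6.0, 1.5):
    upload_mbps = download_mbps / 10
    down_min = transfer_seconds(FILE_MB, download_mbps) / 60
    up_min = transfer_seconds(FILE_MB, upload_mbps) / 60
    print(f"{download_mbps} Mbps DSL: {down_min:.1f} min to download, {up_min:.1f} min to upload")
```

The thing to notice is the upload column: sending that same file out takes ten times as long as pulling it in.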

In some locations even if you are close enough, CenturyLink will still not provide you access because too many people are already connected to that remote. Also if there are too many people connected to a remote, speeds for everyone suffer. This is called “oversubscribing”.

The good part of DSL is it’s cheap, and by bundling with your phone service, you probably only pay $10/month. The down side is sometimes it is not very reliable, and dealing with a huge company like CenturyLink when it doesn’t work can make you want to jump off a cliff!

Satellite

Many people on Orcas have a satellite dish on their house so they can watch TV. So, it seems like you should be able to get internet this way as well.

You can, but like with DSL there are some good and bad issues. The good is that the speeds are better than DSL. You still have slower upload than download though.

The bad is the cost is more than DSL. Also, because your signal goes from your house, out into space, and then back down to earth again, this thing called “latency” comes into play, and even though the speed is good, it takes a little bit before the speed starts happening. For example, you might click on a link on a webpage, and there will be this 2 or 3 (or more) second lag before the page comes back. But once it comes back it all downloads quickly.
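One way to picture latency is to treat every click as a fixed wait plus the actual download time. The sketch below does that. The 2.5 second lag comes from the range above, but the page size, the 12 Mbps satellite speed, and the 0.1 second DSL lag are made-up numbers for illustration only.

```python
def page_load_seconds(page_megabytes: float, speed_mbps: float, lag_seconds: float) -> float:
    """Fixed lag before data starts flowing, plus the time to download the page."""
    return lag_seconds + (page_megabytes * 8) / speed_mbps


PAGE_MB = 2  # assumed size of a typical web page

# Assumed figures: satellite is faster but has a long lag, DSL is slower with a short lag.
satellite = page_load_seconds(PAGE_MB, speed_mbps=12, lag_seconds=2.5)
dsl = page_load_seconds(PAGE_MB, speed_mbps=6, lag_seconds=0.1)

print(f"Satellite: {satellite:.1f} s, DSL: {dsl:.1f} s")
```

With these assumed numbers the "faster" satellite link actually loads a small page more slowly, because the lag is most of the wait. For a big download the lag barely matters and the higher speed wins.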

One of the bigger downsides is usually “data caps”. This means if you consume too much of the internet, you either get cut off, or the speed goes down to really slow, or you get a huge bill at the end of the month.

Things that consume a lot of your internet connection are watching video, backing up your computer, and uploading images to places like Facebook.

You might think that a data cap of 5GB (gigabytes) is a lot. But watching just one Netflix movie will probably blow through that.
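Here is the back-of-the-envelope math behind that claim. It assumes HD streaming uses roughly 3 GB per hour, which is a commonly quoted ballpark rather than a figure from any provider mentioned here. The 100 GB row is only there to show how a larger cap compares.

```python
def hours_until_cap(cap_gb: float, gb_per_hour: float) -> float:
    """Hours of continuous use before a data cap is used up."""
    return cap_gb / gb_per_hour


HD_VIDEO_GB_PER_HOUR = 3.0  # assumption: rough HD streaming rate

for cap_gb in (5, 100):
    hours = hours_until_cap(cap_gb, HD_VIDEO_GB_PER_HOUR)
    print(f"{cap_gb} GB cap: about {hours:.1f} hours of HD video")
```

At that assumed rate, a 5 GB cap is gone in under two hours of video, which is about one movie. And that is before anything else in the house uses the connection.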

Verizon/T-Mobile/AT&T

You might already have access to the internet over your mobile phone, and depending on where you are, you might have “LTE” coverage, and get speeds that are better than what DSL or Satellite can provide.

Also, some of these companies can provide you “Fixed Wireless LTE”, which is a little box that lives at your house that connects to the internet the same way your phone does.

Both of these options have the dreaded “data cap” like with Satellite, and either they will cut down your speed to a crawl when you use up your data, or they will cut you off, or they will send you a big bill.

Again, don’t let large data caps like 100GB fool you. You WILL consume it all, and you will pay them a LOT of money each month.

Mount Baker Cable

I don’t have much experience with this, but from what I know it’s not available everywhere, the speeds are ok, but not always consistent, the reliability is like DSL, and I think there might be data caps as well on this service.

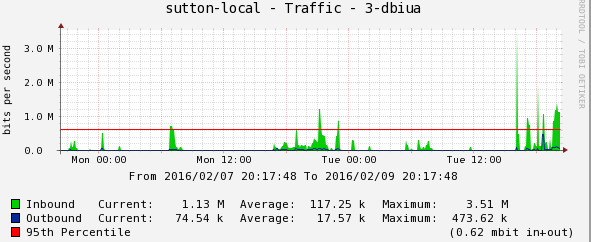

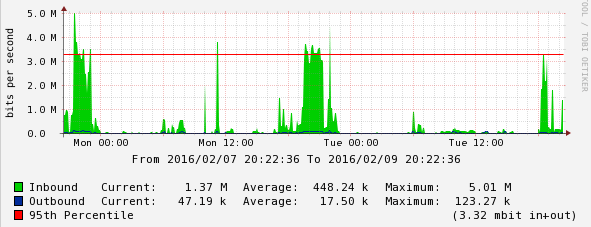

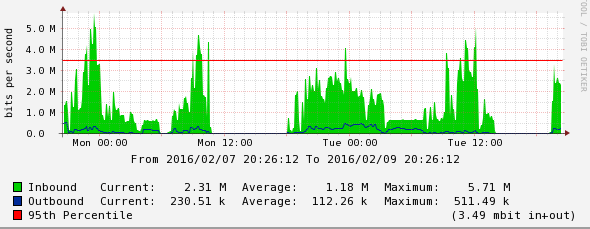

Wireless via Orcas Online or DBIUA

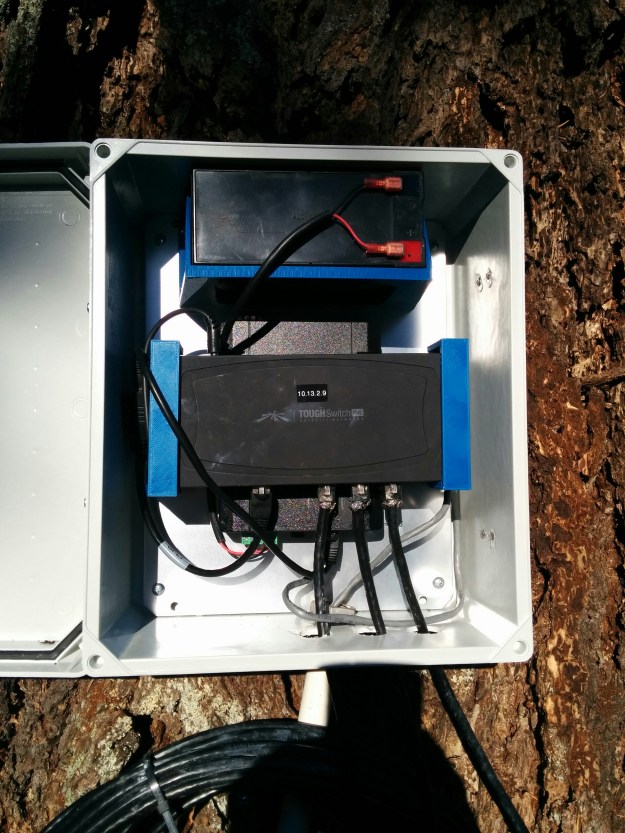

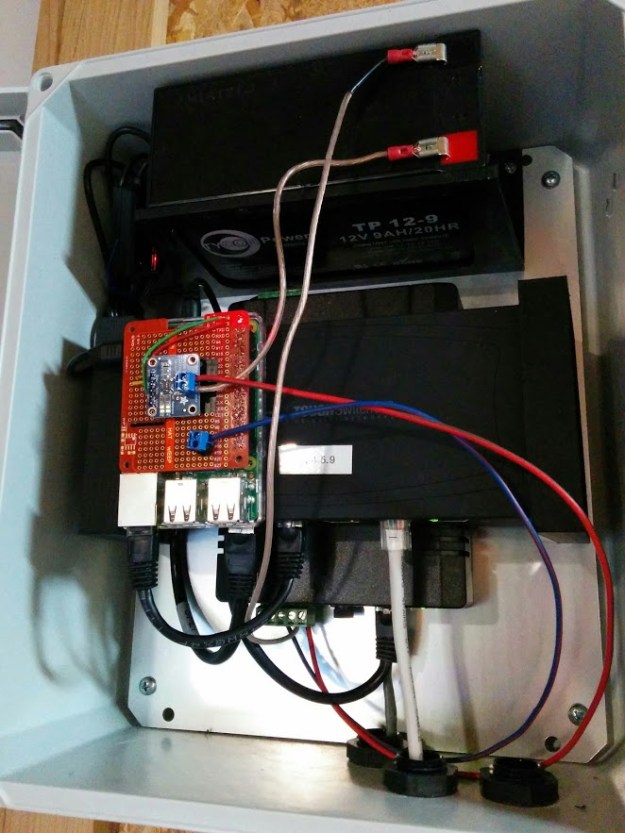

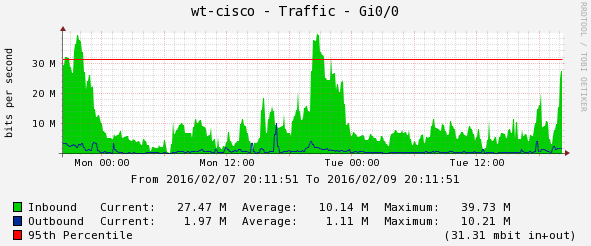

Orcas Online and DBIUA both provide access using small radios that run over public radio frequencies. These radios can provide speeds up to 50 Mbps (or more) both upload and download across long distances (10+ miles in some cases). These radios do require good line of sight, which means you can’t shoot through a bunch of trees.

The good parts of this option are the price is affordable, installation is fairly quick and easy, and speeds are better than DSL.

The downside is that because these radios use public frequencies, at times there can be interference that causes reliability issues.

Prices for Orcas Online vary depending on how fast you want to go. DBIUA is a flat fee, and provides whatever speeds it can to you, and the overall speed of the system is shared with everyone (this is a very uncommon model).

This wireless option is a shared system, so that if several people are connecting to the same upstream radio, they all share the available speed. So not everyone can get 50 Mbps all at the same time.
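A simple way to think about sharing is to split the radio's speed evenly among whoever is actively using it at that moment. That even split is a simplification on my part (real radios do not divide things this neatly), but it gives the right feel for the numbers.

```python
def per_user_mbps(link_mbps: float, active_users: int) -> float:
    """Rough speed each person gets if a shared link is split evenly."""
    if active_users < 1:
        raise ValueError("need at least one active user")
    return link_mbps / active_users


LINK_MBPS = 50  # the radio speed mentioned above

for users in (1, 5, 10):
    print(f"{users} active at once: about {per_user_mbps(LINK_MBPS, users):.0f} Mbps each")
```

People who are connected but not doing anything at that moment do not count, which is why a shared link usually feels faster than this worst-case math suggests.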

Access to this type of connection depends on where you are located. DBIUA is obviously only in the Doe Bay area, but Orcas Online has links and relay points all around Orcas and on other islands as well. You just need to be able to see the correct access point.

Startouch Broadband

Startouch is based in Bellingham and provides commercial microwave connections across the state. These are FCC-licensed links and are expensive to install ($10,000+), but there are no interference issues. They are also capable of very fast speeds. The Orcas public school and library both have this type of connection from Startouch. Notice the large dish on the roof of the library.

The DBIUA also uses one of these links for its upstream connection to the Internet.

Startouch also provides wireless business service at slower speeds and lower costs using radios like Orcas Online and DBIUA.

The requirement to use Startouch is being able to see one of their towers which means Mt. Constitution or towers on the mainland.

OPALCO/Rockisland

Before Rockisland was purchased by OPALCO, they provided wireless services similar to those that Orcas Online and DBIUA provide. But since the purchase, they no longer seem to be offering these services. The push now is to bring fiber to your home, or at least to the homes of those who want to pay for it.

Internet over fiber is very fast and reliable. Speeds are the same both upload and download. But it’s very expensive to get to you. Even if you are less than 100′ away from the backbone it’s still going to cost over $1,000 to hook up. And it takes a LOT of time and effort to get installed because they need to trench all the way to your house.

The other option they are selling is “Fixed Wireless LTE” like the mobile phone providers. Except with no data caps! The monthly cost is about the same as fiber, and the speeds are similar to other wireless options. Installation cost is very affordable ($0?).

This is available now in areas where they have put up their tall poles with cell equipment on them, like at the Eastsound office, and at the Olga substation. Their plan is to put these poles all over the county to improve communication with their linemen, as well as provide internet to people. They have also partnered with T-Mobile to allow them access to this equipment for mobile phone service.

Rockisland’s message is that this wireless option is available for areas where fiber is hard to reach or very expensive. But in my opinion there is no technical reason why everyone should not be able to get this instead of being forced to pay for fiber.

Summary

There are many different options available today for internet access on Orcas. In some locations right now you may only have one available to you.

But as OPALCO builds out their LTE wireless network, as Orcas Online continues to expand their network, and as others learn how to reproduce the DBIUA model, we will have even more options in the future.